Hadoop to Snowflake Migration: Challenges, Best Practices, and Practical Guide

Moving data from Hadoop to Snowflake is quite the task, thanks to how different they are in architecture and how they handle data. In our guide, we’re diving into these challenges head-on. We’ll look at the key differences and what you need to think about strategically. The shift from Hadoop, with its traditional way of processing data, to Snowflake, a top-notch cloud data warehouse platform, comes with its own set of perks and considerations. We’re going to break down the core differences in their architecture and data processing languages, which are pivotal to understanding the migration process.

Plus, we’re not just talking tech here. We’ll tackle the business side of things too – like how much it’s going to cost, managing your data properly, and keeping the business running smoothly during the switch. Our aim is to give you a crystal-clear picture of these challenges. We want to arm you with the knowledge you need for a smooth and successful move from Hadoop to Snowflake.

Lastly, we’ll discuss how Datafold’s powerful AI-driven migration approach makes it faster, more accurate, and more cost-effective than traditional methods. With automated SQL translation and data validation, Datafold minimizes the strain on data teams and eliminates lengthy timelines and high costs typical of in-house or outsourced migrations.

This lets you complete full-cycle migration with precision–and often in a matter of weeks or months, not years–so your team can focus on delivering high-quality data to the business. If you’d like to learn more about the Datafold Migration Agent, please read about it here.

Common Hadoop to Snowflake migration challenges

Moving from Hadoop to Snowflake requires getting a grip on the technical challenges to produce a smooth transition. To begin, let’s talk about the intricate differences in architecture and data processing capabilities between the two platforms. Getting a handle on these technical details is necessary to craft an effective migration strategy that keeps hiccups to a minimum and really gets the most out of Snowflake’s capabilities.

As you shift from Hadoop to Snowflake, you’ll need to adapt your current data workflows and processes to fit Snowflake’s unique cloud setup. It’s necessary for businesses to keep their data sets intact and consistent during this move. Doing so is key to really tapping into what Snowflake’s cloud-native features have to offer. If you maintain high data quality, you’ll achieve better data storage, more efficient processing, and seamless data retrieval in your cloud environment.

Architecture differences between Hadoop and Snowflake

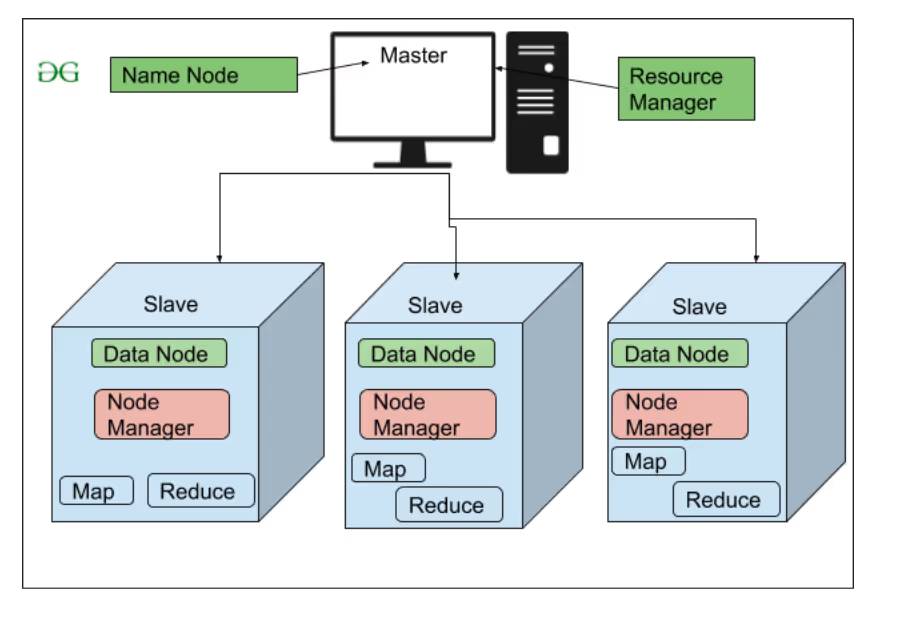

Hadoop and Snowflake are like apples and oranges when it comes to managing and processing data. Hadoop focuses on its distributed file system and MapReduce processing. It’s built to scale across various machines, but managing it can get pretty complex. Its HDFS (Hadoop Distributed File System) is great for dealing with large volumes of unstructured data. However, you’ll need extra tools to use the data for analytics purposes.

Snowflake’s setup is built for the cloud from the ground up, which lets it split up storage and computing. The separation of these two components means it can scale up or down really easily and adapt as needed. In everyday terms, this makes handling different kinds of workloads fat more efficient and reduces management overhead. All this positions Snowflake as a more streamlined choice for cloud-based data warehousing and analytics.

Hadoop’s architecture explained

Hadoop’s architecture, known for its ability to handle big data across distributed systems. It’s like a powerhouse when it comes to churning through huge, unstructured datasets. But, it’s not all smooth sailing – managing it can get pretty complex, and shifting to cloud-based tech can be a bit of a hurdle. Hadoop stands out because of its modular, cluster-based setup, where data processing and storage are spread out over lots of different nodes. For businesses that really care about keeping their data compatible and moving it around efficiently, these are important points to think about when moving to Snowflake.

Scalability: Hadoop handles growing data volumes by adding more nodes to the cluster. We call this horizontal scaling. For a lot of businesses, this is a cost-effective way to handle massive amounts of data. But, it’s not without its headaches – it brings a whole lot of complexity in managing those clusters and keeping the network communication smooth. And as that cluster gets bigger, keeping everything running smoothly and stable gets trickier.

Performance challenges: Hadoop’s performance is highly dependent on how effectively its ecosystem (including HDFS and MapReduce) is managed. When you’re dealing with data on a large scale, especially in batch mode, it can take a while, and you might not get the speed you need for real-time analytics. Getting Hadoop tuned just right for peak performance is pretty complex and usually needs some serious tech know-how.

Integration with modern technologies: Hadoop was a game-changer when it first came out in the mid-2000s, but it’s had its share of struggles fitting in with the newer, cloud-native architectures. Its design is really focused on batch processing, not so much on real-time analytics. As a result, it doesn’t always mesh well with today’s fast-paced, flexible data environments.

Snowflake’s architecture explained

Snowflake’s architecture is designed as a cloud data warehouse. Its separate storage and computing resources means it’s engineered for enhanced efficiency and flexibility. You can dial its computing power up or down depending on what you need at the moment, which is great for not wasting resources. Plus, Snowflake is optimized for storing data – it cuts down on duplicates, so you end up using less space and saving money compared to Hadoop. All in all, Snowflake is a solid choice for managing big data. It’s got the edge in scalability and performance, especially when you stack it up against Hadoop’s way of mixing data processing and storage.