Understanding data quality issues and solutions

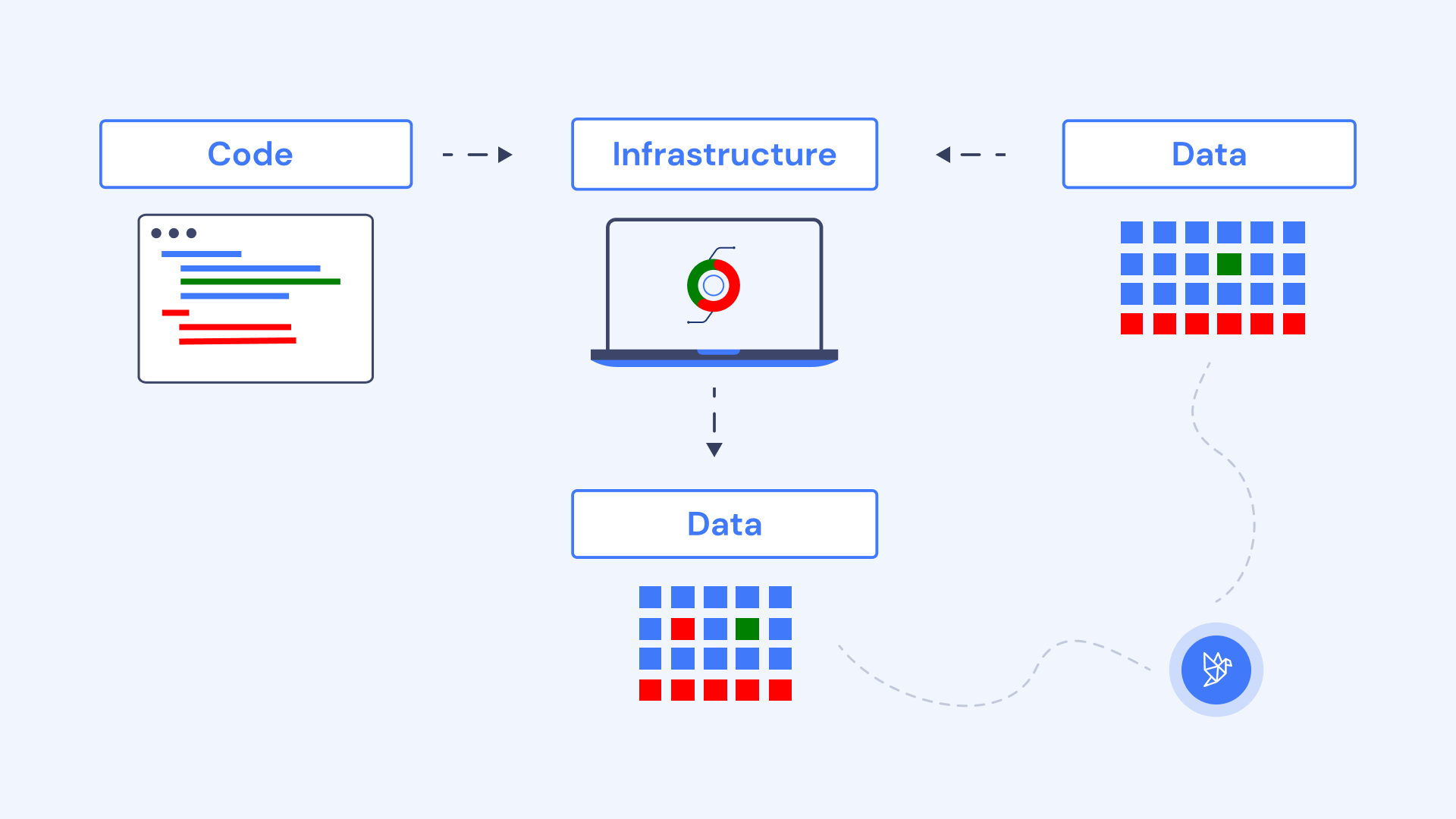

Data quality issues are caused by changes in the code, data and infrastructure. Compare solutions to test code, observe data and monitor infrastructure.

Data quality issues are problems with analytical data that affect data accuracy, completeness, timeliness, or consistency. Data quality issues are detrimental to the business if incorrect data is used to make important decisions, human or machine alike.

When it comes to data quality issues, it’s hard to identify a single culprit. Data quality issues can be introduced in any component of the data pipeline: ingestion, storage, transformation, orchestration or consumption.

In this article, we explore how data quality issues are caused by changes in the code, data and infrastructure. We provide examples across two types of data quality issues: production-specific and development-specific data quality issues. We also compare solutions to test code, observe data and monitor infrastructure.

Types of data quality issues

There are two main types of data quality issues depending on the environment where they are introduced: production-specific and development-specific data quality issues.

Production-specific data quality issues

All data quality issues, if they’re not addressed earlier in the process, eventually become production issues. Production-specific data quality issues arise in production and can’t be detected ahead of time.

Development-specific data quality issues

All data is produced, moved, and processed by software expressed in code, written in Java, Python, SQL, or some other language even if imputed by a human. When data engineers or analytics engineers write or modify code that processes data, there is always a chance for a data problem to be introduced. Development-specific data quality issues can be caught before they affect production data.

Next, we’ll explore causes for each type of data quality issue and how to prevent issues from impacting production data and, ultimately, the business.

Causes of production-specific data quality issues

The main causes of production-specific data quality issues are:

- Source and third-party data changes.

- Infrastructure failures.

Source and third-party data changes

The most common cause of production-specific data quality issues is the ingestion of third-party data sources. This is because you have no control over the underlying data; it can be reliable at one moment but suddenly change without notice. These issues may also be difficult to fix immediately, thereby persisting for some time without resolution.

Let’s explore concrete examples of data quality issues that can stem from third-party data sources: file formats changing, malformed data values and schema changes.

File formats changing without warning

Consider a Python data pipeline that ingests CSV files that are gzipped, given the .gz extension, and sourced from a third-party SFTP server.

`import gzip

with gzip.open(‘third-party-files/daily_extract.gz’, ‘rb’) as f: file_content = f.read()

` Unfortunately, this sort of approach will immediately break when that third party decides—intentionally or not—to stop gzipping their files. The code above will raise an exception that may halt the processing logic and cause one of the most common data quality issues: you won’t have any new data down the pipe.

Malformed data values and schema changes

Third-party data sources can leave you with malformed data values or the addition or removal of data points without warning. Malformed data values can be difficult to catch and address, as each data type and normal value range can vary widely depending on the data source.

Let’s take the example of an order file from a third-party vendor that sends order files that are ingested by a data pipeline. This is the first row of a normal file:

order_id | product_id | product_desc | order_quantity | amount 1234 | 5678 | widget | 1 | 10.00

However, another file comes in later on, and it differs without warning. It looks like this:

order_id | product_description | order_quantity | amount 1234 | widget | 1 | 1e9+9

It’s common for columns to be renamed unwittingly from product_desc to product_description*. *In addition, however, the amount field suddenly shows in scientific notation due to a source data type error, and the product_id field has disappeared entirely.

When undetected, these column name changes can create pipeline breakages. Even worse, a pipeline may ingest the data incorrectly, resulting in a widespread population of NULL values. In many cases, these issues may go undetected for weeks or months. The same is true for malformed data points. Without in-depth data observability and alerting, a data team may be relying on pure luck to be made aware of such changes.

Data infrastructure failures

Even if all the underlying data is correct, processing it at scale requires software running reliably. While data-processing technologies vary greatly in their robustness (for example, one could argue that Snowflake and Databricks are far more robust than Hive or Hadoop for SQL workflows), issues can still occur:

- Data warehouse queries can fail or suddenly take orders of magnitude more time.

- Spot compute instances can die underneath the clusters.

- Variations in user activity can sporadically clog computational resources.

Solutions to detect data quality issues in production

Data observability tools can help to detect data quality issues (schema changes, distribution changes, stale data…) that arise from data changes and infrastructure failures in production environments. These changes and failures are hard to predict during the development process and require observing production data. Popular data observability solutions include Metaplane, Monte Carlo, Anomalo, Lightup, Soda and BigEye.

Data observability tools are more useful to find the root cause issue than infrastructure monitoring tools such as Datadog and New Relic. However, you can use both solutions to inform each other, like surfacing data quality issues arising from infrastructure failures.

Causes of development-specific data quality issues

Once a data quality issue reaches production, a business can encounter substantial costs or risks, including: erroneous executive dashboards, incorrect customer information, erosion of stakeholder trust and reputational risk. At Datafold, we believe that proactively catching data quality issues before they reach production is the most effective way to ensure reliable data and increase data productivity.

Since all data is processed by software, most data issues are, in fact, bugs in the data-processing software, such as SQL code used for data transformations.

The main causes of development-specific data quality issues are:

- Incorrect implementation of technology.

- Misunderstood or misapplied business requirements.

- Unaccounted downstream dependencies.

Incorrect implementation of technology

Some data transformation bugs are introduced from something as simple as the incorrect use of a particular technology. This is a code implementation problem.

Let’s consider a column that holds a STRING data type that needs to be parsed to obtain a particular value. For example, we have an order_information from which we need to extract a date. What if the incorrect implementation simply misses the correct substring location by one character?

`df = spark.createDataFrame( [(‘2022/01/02 order placed.’),], [‘order_information’]) df.select( substring(df.‘order_information’, 2, 10).alias(‘order_date’) ).collect()

[Row(‘order_date’=‘022/01/02’)] ` We now have a malformed order_date that truncates the year and is not valid. Data transformation bugs, including those caused by data type mismatches and schema inaccuracies caused by incorrect code implementation, are very common.

Misapplied or misunderstood requirements

On the other end is the correct implementation of technology (correct code), but a misunderstanding of requirements. For example, consider a CASE statement in an SQL transformation:

SELECT DISTINCT customer_id, customer_name, CASE WHEN customer_status = 1 THEN 'active' ELSE 'inactive' END as customer_status FROM default.customer_orders WHERE order_date >= CAST(current_timestamp() as DATE)

In the above example, the data transformation uses a CASE statement to determine the status of a customer. Data quality issues frequently arise when logic such as this is simply misinterpreted. For example, it’s possible that a customer_status value of 1 means the customer has deleted their account.

The only problem with the code is that it’s doing exactly the wrong thing due to human error or misunderstanding. This leads to —you guessed it— data quality issues.

Unaccounted downstream dependencies

You did everything right, thoroughly mapped out business requirements, clarified definitions, and thoughtfully expressed the logic in SQL… but still caused a massive data quality incident! Changing the SQL definition of customer_status, while thoroughly tested in the context of the data pipeline, caused marketing to send promotions to the wrong people for two months until they noticed.

Preventing undesired impact on downstream data comes through a clear understanding of data lineage and communication of business requirements across all data producers and consumers.

Solutions to prevent data quality issues during deployment

Data testing tools can help to catch data quality issues proactively, before they reach production, by testing data changes introduced from code that processes data. Popular solutions to test and validate data changes include dbt tests during development, and Datafold’s data diffing during your continuous integration (CI) process.

Testing assertions

Assertions check that a dataset conforms to a given expectation. For example, column customer_id should not have any NULL values. Or column customer_status should only have values in the list: [“free_trial”, “paying”, “churned”]. Tools for implementing data assertions include: dbt tests (if you express data transformations in dbt) and Great Expectations.

Assertions can be helpful in codifying and validating many important assumptions about the data. However, the major limitation of assertions is the need to write and maintain lots of them for meaningful test coverage, which is unrealistic for the scale of any data platform. Therefore, assertions should typically be applied only to the most important data assets and columns.

Comparing development and production data

Datafold compare datasets in or across databases. Just like how git diff highlights changes in the source code, Datafold’s data diffing visualizes all changes in the resulting data by comparing development and production data. It can be very helpful in proactively catching data quality issues when transforming or migrating data.

By implementing Datafold directly in your CI process, you can see exactly how your code changes will potentially change the data in your production environment. Datafold’s rich UI allows data teams to investigate at the value-level how, say for example, a development version of DIM_ORGS and a production version differ.

By plugging directly into your CI process, whenever you open up a pull request (PR) for your dbt project or other data transformation tooling, Datafold automatically adds a comment summarizing the data differences between your production and PR branch environments. Because of Datafold’s robust column-level lineage, the Datafold PR comment also lists out downstream tables, dbt models, and data app assets (e.g., BI dashboards, reverse ETL sync) that will be changed as a result of your code change being merged in to production.

Armed with the value-level data differences and impact analysis provided by Datafold, data and analytics engineers can ship code changes with confidence and prevent data quality issues from ever entering their production environment. Data diffing also captures all data changes, including “unknown unknowns” that cannot be caught with traditional dbt assertion tests or unit tests. (As our solutions engineer, Leo Folsom says, “There is no generic dbt test that will tell me if a

Armed with the value-level data differences and impact analysis provided by Datafold, data and analytics engineers can ship code changes with confidence and prevent data quality issues from ever entering their production environment. Data diffing also captures all data changes, including “unknown unknowns” that cannot be caught with traditional dbt assertion tests or unit tests. (As our solutions engineer, Leo Folsom says, “There is no generic dbt test that will tell me if a first_name value changed from ‘Leo’ to ‘Leon’, whereas Datafold will always catch that.)

As we like to say at Datafold, your data should naturally be changing over time. But data practitioners deserve to have complete transparency and control over how that data is changing, with the power of a data diff.

Understanding data lineage

It’s important to surface how data will be impacted by code updates to specific data tables, but there is a world of downstream data assets that could be broken by those updates.

It’s common across data catalogs, data observability tools ,and data testing tools to provide some sort of data lineage feature. Data quality and catalog tooling use data lineage to spread data knowledge across the organization, data observability tools use it to do root cause analysis while data testing tools use it to prevent data quality issues.

For example, column-level lineage can be used to visualize column-level dependencies between tables, columns, and data applications to ensure that all downstream impacts are accounted for.

Conclusion

We’ve seen how data quality issues can arise from changes in the code, data and infrastructure. Production-specific data quality issues are primarily caused by unexpected changes in the data and infrastructure failures. Development-specific data quality issues are primarily caused by untested code changes.

You can use a combination of data testing tools to prevent data quality issues introduced by code changes, data observability tools to catch production-specific data issues and software monitoring tools to detect infrastructure failures.