Hadoop to Databricks Migration: Challenges, Best Practices, and Practical Guide

Explore key strategies for migrating from Hadoop to Databricks, covering challenges, best practices, and a step-by-step practical migration guide.

If you’re planning a Hadoop migration to Databricks, use this guide to simplify the transition. We shed light on moving from Hadoop’s complex ecosystem to Databricks’ streamlined, cloud-based analytics platform. We delve into key aspects such as architectural differences, analytics enhancements, and data processing improvements.

We’re going to talk about more than just the tech side of things and tackle key business tactics too, like getting everyone on board, keeping an eye on the budget, and making sure day-to-day operations run smoothly. Our guide zeros in on four key pillars for nailing that Hadoop migration: picking the right tools for the job, smart planning for moving your data, integrating everything seamlessly, and setting up strong data rules in Databricks. We’ve packed this guide with clear, actionable advice and best practices, all to help you steer through these hurdles for a transition that’s not just successful, but also smooth and efficient.

Lastly, Datafold’s powerful AI-driven migration approach makes it faster, more accurate, and more cost-effective than traditional methods. With automated SQL translation and data validation, Datafold minimizes the strain on data teams and eliminates lengthy timelines and high costs typical of in-house or outsourced migrations.

This lets you complete full-cycle migration with precision–and often in a matter of weeks or months, not years–so your team can focus on delivering high-quality data to the business. If you’d like to learn more about the Datafold Migration Agent, please read about it here.

Common Hadoop to Databricks migration challenges

In transitioning from Hadoop to Databricks, one of the significant technical challenges is adapting current Hadoop workloads to Databricks’ advanced analytics framework and managing data in a cloud-native environment. It’s possible to achieve this by reconfiguring and optimizing Hadoop workloads, which were originally designed for a distributed file system and batch processing, to leverage the real-time, in-memory processing capabilities of Databricks.

You’ll also have to think about managing data in a cloud-native space. The way it’s done in Databricks is vastly different from the way it works for Hadoop. It may sound overwhelming, but it’s totally doable. You’ll have to rework and fine-tune your Hadoop workloads, originally crafted for distributed file systems and batch processing, to really make the most of Databricks’ speedy, in-memory processing. This work involves carefully adjusting these workloads to effectively align with the new environment provided by Databricks.

To get started, we need to talk about architecture.

Architecture differences

Hadoop and Databricks have distinct architectural approaches, which influence their data processing and analytics capabilities.

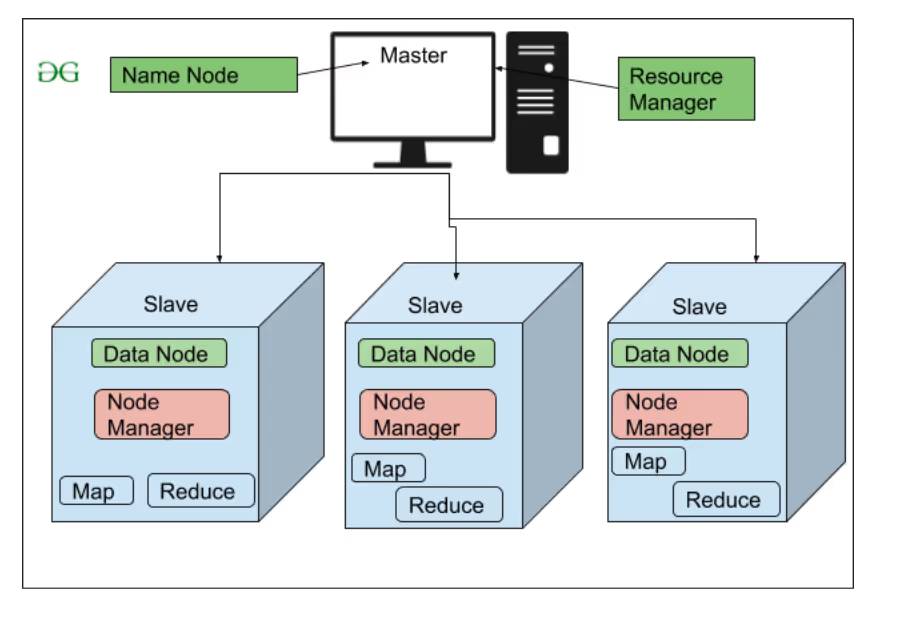

Hadoop, famed for its ability to handle vast volumes of data via its distributed file system and MapReduce batch processing, operates across multiple hardware systems. While its design is robust for large-scale data handling, it’s fairly complex. It demands hands-on setup and management of clusters and typically relies on extra tools for in-depth data analytics, striking a balance between power, complexity, and hosted on-premise hardware.

Databricks, on the other hand, offers a unified analytics platform built on top of Apache Spark. As a cloud-native solution, it simplifies the user experience by managing the complex underlying infrastructure. Its architecture facilitates automatic scaling and refined resource management. Hadoop’s approach leads to enhanced efficiency and accelerated processing of large-scale data, making it a robust yet user-friendly platform for big data analytics.

Hadoop’s architecture explained

Hadoop’s design is deeply rooted in the principles of distributed computing and big data handling. It’s crafted to manage enormous data sets across clustered systems efficiently. The system adopts a modular approach, dividing data storage and processing across several nodes. Its architecture not only enhances scalability but also ensures robust data handling in complex, distributed environments.

Data type mapping: Hadoop to Databricks

These are the type mappings between Hadoop and Databricks that you will encounter during migration. Silent type mismatches are one of the most common causes of post-migration data discrepancies. Validate every column, not just the row counts.

| Hadoop type | Databricks type | Notes |

|---|---|---|

TINYINT | TINYINT | Hive TINYINT is 1-byte signed integer |

SMALLINT | SMALLINT | |

INT | INT | |

BIGINT | BIGINT | |

FLOAT | FLOAT | 32-bit single precision |

DOUBLE | DOUBLE | |

DECIMAL(P,S) | DECIMAL(P,S) | |

STRING | STRING | Hive STRING is unbounded |

VARCHAR(N) | STRING | |

CHAR(N) | STRING | |

BOOLEAN | BOOLEAN | |

BINARY | BINARY | Variable-length binary data |

DATE | DATE | |

TIMESTAMP | TIMESTAMP_NTZ | |

ARRAY<T> | ARRAY<T> | |

MAP<K,V> | MAP<K,V> | |

STRUCT<...> | STRUCT<...> | |

UNIONTYPE<...> | VARIANT | No native union type; serialize as JSON/VARIANT |