Teradata to Snowflake Migration: Challenges, Best Practices & Practical Guide

Embarking on a migration from Teradata to Snowflake? Let’s make it simpler for you. Our guide breaks down the process, spotlighting the differences between Teradata’s on-premises system and Snowflake’s cloud-based approach. We explain what these differences mean for your data strategy, focusing on key areas like storage, processing, and scalability, as well as crucial business aspects like stakeholder alignment, budgeting, and minimizing downtime. Plus, we share four essential tips for a smooth migration, from choosing the right tech and prioritizing assets to efficient data validation.

Lastly, we show how Datafold’s AI-driven approach can make a migration faster, more accurate, and more cost-effective than traditional methods. With automated SQL translation and data validation, Datafold reduces the strain on data teams and helps avoid the lengthy timelines and high costs typical of in-house or outsourced migrations.

This lets you complete a full-cycle migration with precision, often in a matter of weeks or months rather than years, so your team can focus on delivering high-quality data to the business. If you’d like to learn more about the Datafold Migration Agent, please read about it here.

Common Teradata to Snowflake migration challenges

Technical challenges

In transitioning from Teradata to Snowflake, data engineers encounter a range of technical hurdles that demand careful attention. We look at two technical challenges that can significantly impact the migration process: architecture differences and SQL dialect differences. Understanding the core architectural differences is crucial for a successful migration, because they influence everything from data distribution strategies to query performance and overall system scalability.

Architecture differences

While there are many differences between Teradata and Snowflake, a major architectural difference that affects the migration approach is the storage-compute coupling.

Teradata’s traditional, typically on-premises architecture contrasts sharply with Snowflake’s cloud-based data warehouse model. Moving from physical infrastructure to a service-oriented architecture demands a fundamental change in how you manage data scalability, storage, and computing resources.

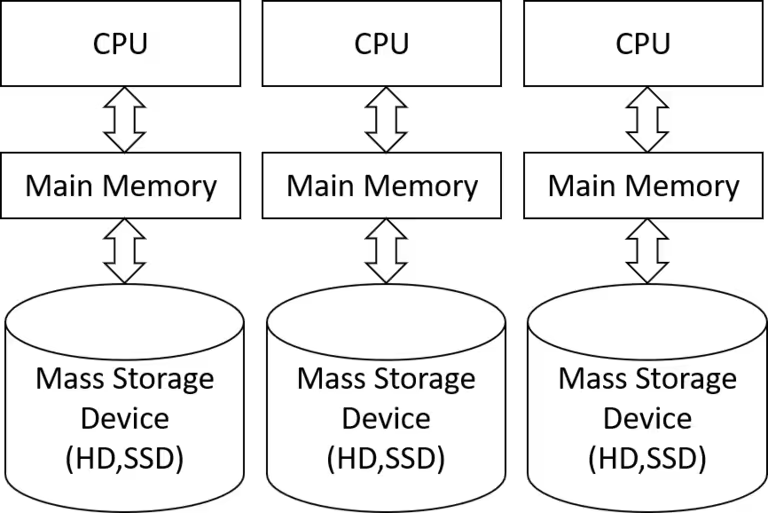

Specifically, Teradata employs a Massively Parallel Processing (MPP) architecture, distributing data across numerous nodes for parallel processing. This approach tightly integrates data storage with computational resources.

Teradata’s architecture explained

A Teradata deployment consists of a cluster of Access Module Processors (AMPs), which are the workhorses behind its data processing capabilities. Each AMP is deployed on a server instance, taking on a portion of both compute and storage responsibilities for the entire cluster. This setup means that storage and compute are coupled, with a specific segment of data being stored and processed on the same physical machine.

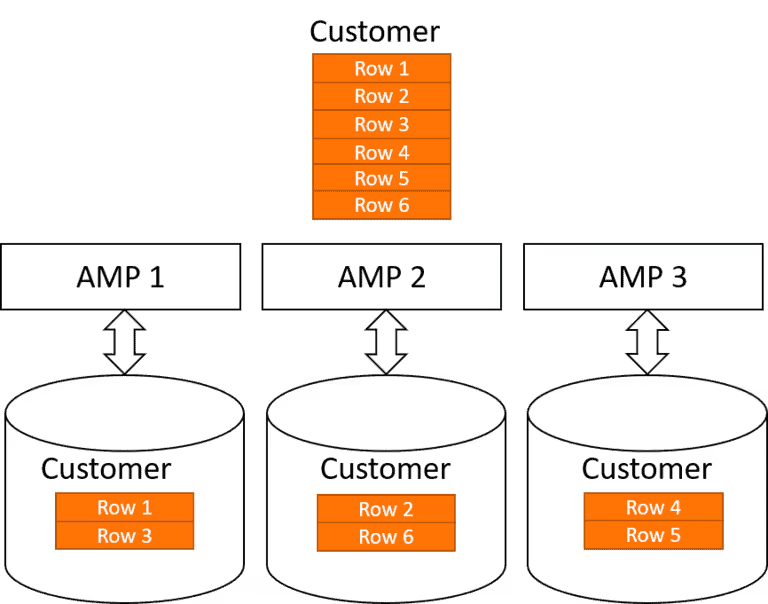

This coupling has profound implications for how Teradata manages data and query performance. Like other MPP systems such as Amazon Redshift, Teradata uses a hashing algorithm to distribute data across its AMPs: each row is assigned to an AMP based on a hash of its primary index value.
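To make hash distribution concrete, here is a minimal Python sketch. It is not Teradata’s actual row-hash algorithm: the MD5-based `amp_for` function, the four-AMP cluster, and the `orders` rows are all hypothetical stand-ins chosen for illustration.

```python
import hashlib

NUM_AMPS = 4  # hypothetical cluster size, for illustration only


def amp_for(key, num_amps=NUM_AMPS):
    """Map a distribution-key value to an AMP number via a stable hash.

    MD5 is only a stand-in here; Teradata uses its own hashing scheme.
    """
    digest = hashlib.md5(str(key).encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % num_amps


def distribute(rows, key_column, num_amps=NUM_AMPS):
    """Group rows by the AMP that owns their distribution-key value."""
    amps = {amp: [] for amp in range(num_amps)}
    for row in rows:
        amps[amp_for(row[key_column], num_amps)].append(row)
    return amps


# Hypothetical table: 1,000 orders spread over 50 customers.
orders = [{"order_id": i, "customer_id": i % 50} for i in range(1000)]

by_customer = distribute(orders, "customer_id")
rows_per_amp = {amp: len(rows) for amp, rows in by_customer.items()}
print(rows_per_amp)  # how many rows each AMP stores and processes
```

Every row with the same `customer_id` lands on the same AMP, so that AMP both stores and processes those rows. How evenly the rows spread depends on the key chosen.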

This method is efficient but introduces three challenges for scalability and performance:

- Dependence on hashing key for query performance: Query performance depends on whether the joins and filters in a query use the hashing key. When they do not, performance drops because data must first be redistributed across AMPs.

- Scaling limitations: To scale storage or compute capabilities to process more queries, more nodes need to be added. This can be a costly operation that requires data redistribution among the new and existing nodes. Though the introduction of Teradata VantageCloud allows for compute-only clusters, they still depend on a Primary Cluster for storage. This dependency can quickly become a bottleneck, limiting scalability.

- Elasticity constraints in compute demand: The architecture of Teradata shows limited flexibility in scaling up or down in response to fluctuating business demands for query processing. Since a cluster storing data cannot be easily adjusted, this often leads to either underutilized resources or performance bottlenecks, impacting cost-efficiency.
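The first challenge above, dependence on the hashing key, can be illustrated with a small Python sketch. It assumes a hypothetical `orders` table joined to a table that is already distributed on `customer_id`, and it uses an MD5-based `amp_for` function as a stand-in for Teradata’s real hashing. It counts the rows that are not already on the AMP that owns their join key and would therefore have to move before the join.

```python
import hashlib

NUM_AMPS = 4  # hypothetical cluster size


def amp_for(key, num_amps=NUM_AMPS):
    """Stand-in hash function mapping a key value to an AMP number."""
    digest = hashlib.md5(str(key).encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % num_amps


def rows_to_redistribute(rows, distribution_column, join_column, num_amps=NUM_AMPS):
    """Count rows stored on a different AMP than the one owning their join key."""
    return sum(
        1
        for row in rows
        if amp_for(row[distribution_column], num_amps)
        != amp_for(row[join_column], num_amps)
    )


orders = [{"order_id": i, "customer_id": i % 50} for i in range(1000)]

# Table distributed on the join key: every row is already in the right place.
aligned = rows_to_redistribute(orders, "customer_id", "customer_id")

# Table distributed on order_id but joined on customer_id: many rows must move.
misaligned = rows_to_redistribute(orders, "order_id", "customer_id")

print(aligned, misaligned)
```

In this sketch the aligned layout needs no data movement, while the misaligned layout has to ship a large share of the table between AMPs before the join can run.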

Snowflake’s architecture explained

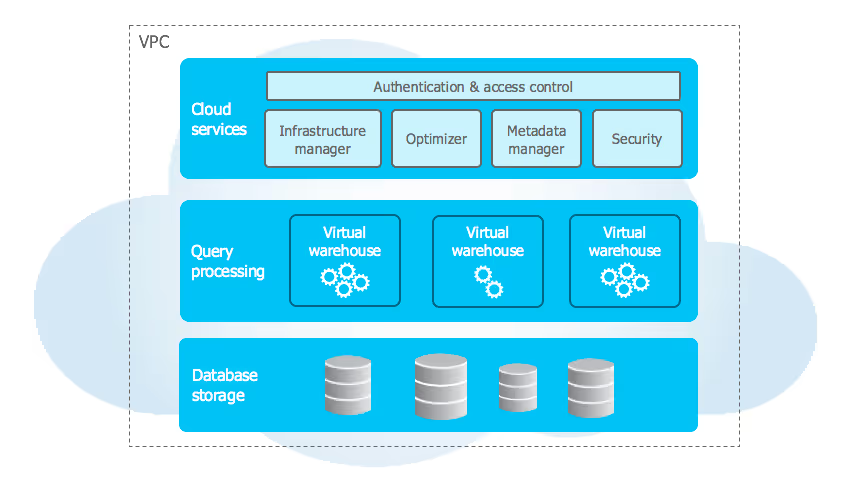

By contrast, Snowflake’s cloud-native, multi-cluster, shared data architecture separates compute and storage functions. This separation allows for more flexible scaling and efficient resource utilization.

Because Snowflake’s storage and compute layers are decoupled, either layer can be scaled independently and on demand. You can dynamically adjust compute resources; more virtual warehouses can be spun up in the morning when data analysts refresh dashboards and then scaled down overnight when data use is low.

This adaptability ensures high efficiency and a better match with varying business demands for data processing. Furthermore, managing these operations is user-friendly, with straightforward controls accessible via an API or UI, requiring minimal specialized knowledge.
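As a rough sketch of what schedule-driven scaling could look like, the Python snippet below builds Snowflake `ALTER WAREHOUSE ... SET WAREHOUSE_SIZE` statements from a time-of-day schedule. The warehouse name `ANALYTICS_WH`, the peak hours, and the chosen sizes are hypothetical. The snippet only generates SQL text, and sending it to Snowflake is left to whichever client you use. Resizing one warehouse is only one option; adding warehouses or relying on auto-suspend are alternatives.

```python
from datetime import time

# Hypothetical schedule: (start, end, warehouse size) for peak hours.
SCHEDULE = [
    (time(7, 0), time(19, 0), "LARGE"),
]
OFF_PEAK_SIZE = "XSMALL"  # assumed size outside the scheduled windows


def size_for(now):
    """Return the warehouse size that applies at the given time of day."""
    for start, end, size in SCHEDULE:
        if start <= now < end:
            return size
    return OFF_PEAK_SIZE


def resize_statement(warehouse, now):
    """Build the ALTER WAREHOUSE statement for the given time of day."""
    return f"ALTER WAREHOUSE {warehouse} SET WAREHOUSE_SIZE = '{size_for(now)}';"


print(resize_statement("ANALYTICS_WH", time(9, 30)))
# ALTER WAREHOUSE ANALYTICS_WH SET WAREHOUSE_SIZE = 'LARGE';
print(resize_statement("ANALYTICS_WH", time(23, 0)))
# ALTER WAREHOUSE ANALYTICS_WH SET WAREHOUSE_SIZE = 'XSMALL';
```

Because storage is separate from compute, a resize like this changes only the compute serving queries; no stored data has to be moved.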

Benefits of migration to Snowflake

- Reduced DevOps effort: Migrating to Snowflake significantly lessens the DevOps workload. A Teradata deployment, particularly on-premises, has numerous components that need regular maintenance and optimization, whereas Snowflake abstracts away these internal complexities. As a result, users spend far less time on system maintenance and performance tuning.

- Shift from an ETL to ELT paradigm: Due to the scalability limitations of Teradata, teams commonly filter and transform data before loading it into Teradata. This limits the range of data that Teradata users can query. In contrast, Snowflake’s elastic scalability supports an ELT pattern in which raw data is ingested and stored in Snowflake, then transformed on demand.

- Simplified and more flexible table layout: Efficient data management in Teradata requires carefully selecting table layouts, indices, and strategies for data distribution across nodes. Snowflake handles much of this complexity automatically. It offers an optional clustering key for optimizing performance on extremely large tables, but without the intricate setup that Teradata requires. This simplicity streamlines data organization and management.
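To illustrate the table layout point, the following Python sketch generates two `CREATE TABLE` statements for the same hypothetical `orders` table. The Teradata version names a `PRIMARY INDEX` that controls row distribution across AMPs. The Snowflake version needs no distribution clause and takes an optional `CLUSTER BY` key. The table, columns, and helper functions are assumptions made for illustration; this is not a complete DDL translator.

```python
def _column_list(columns):
    """Render (name, type) pairs as an indented column list."""
    return ",\n    ".join(f"{name} {dtype}" for name, dtype in columns)


def teradata_ddl(table, columns, primary_index):
    """Teradata DDL: the primary index determines row distribution."""
    return (
        f"CREATE TABLE {table} (\n    {_column_list(columns)}\n)"
        f" PRIMARY INDEX ({', '.join(primary_index)});"
    )


def snowflake_ddl(table, columns, cluster_by=None):
    """Snowflake DDL: no distribution clause; clustering is optional."""
    ddl = f"CREATE TABLE {table} (\n    {_column_list(columns)}\n)"
    if cluster_by:
        ddl += f" CLUSTER BY ({', '.join(cluster_by)})"
    return ddl + ";"


# Hypothetical table definition.
columns = [
    ("order_id", "INTEGER"),
    ("customer_id", "INTEGER"),
    ("order_date", "DATE"),
    ("amount", "DECIMAL(12,2)"),
]

print(teradata_ddl("orders", columns, primary_index=["customer_id"]))
print(snowflake_ddl("orders", columns))
print(snowflake_ddl("orders", columns, cluster_by=["order_date"]))
```

The design choice that matters in a migration is that the primary index decision disappears. A clustering key is something you would add later, and only if a very large table shows poor pruning.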