SQL Server to Snowflake Migration: Challenges, Best Practices, and Practical Guide

Discover the key challenges, best practices, and a practical guide for migrating from SQL Server to Snowflake.

Embarking on a migration from SQL Server to Snowflake involves navigating technical intricacies and strategic planning. From understanding the fundamental differences in database architecture and SQL dialects to efficient data transfer strategies, we explain what these differences mean for your data strategy, focusing on key areas like storage, processing, and scalability, as well as crucial business aspects like stakeholder alignment, budgeting, and minimizing downtime.

Designed for data teams, this guide offers a comprehensive roadmap for a smooth migration, from choosing the right tech and prioritizing assets to efficient data validation. Datafold’s powerful AI-driven migration approach makes it faster, more accurate, and more cost-effective than traditional methods. With automated SQL translation and data validation, Datafold minimizes the strain on data teams and eliminates lengthy timelines and high costs typical of in-house or outsourced migrations.

This lets you complete full-cycle migration with precision–and often in a matter of weeks or months, not years–so your team can focus on delivering high-quality data to the business. If you’d like to learn more about the Datafold Migration Agent, please read about it here.

Common Microsoft SQL Server to Snowflake migration challenges

Technical challenges

The transition from SQL Server to Snowflake presents data teams with a range of technical hurdles that demand careful planning and strategic approach. This section explores two major challenges: the differences in database architecture and the distinct SQL dialects of each platform.

Architecture differences

SQL Server, a traditional relational database management system (RDBMS) often hosted on-premises, uses a shared-disk architecture. This means it relies on a single instance to manage the database, leading to potential bottlenecks in performance and scalability.

In contrast, Snowflake employs a unique multi-cluster, shared-data architecture. This architecture separates storage and compute functions, allowing for independent scaling of each, resulting in highly efficient resource utilization and performance. Snowflake’s design also offers near-infinite scalability and concurrency without compromising on performance.

Understanding these fundamental differences is key in planning a migration strategy, as it influences data modeling, performance tuning, and resource allocation.

SQL Server’s architecture explained

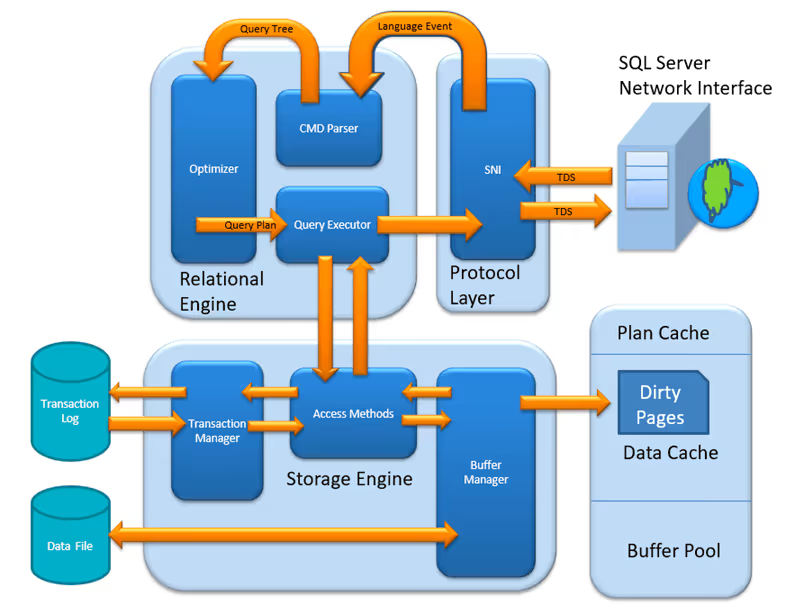

At its core, SQL Server operates with a three-layered architecture consisting of the Protocol Layer, Relational Engine, and Storage Engine.

The protocol layer serves as the front-end interface for clients to communicate with the SQL Server. It ensures secure connections and manages how data requests are sent and received.

For compute, SQL Server relies on its relational engine, which is responsible for parsing, interpreting, and executing SQL queries. It serves as the “brain” of SQL server by taking SQL queries from clients, figuring out the best way to execute them, and then actually performing the requested actions on the data.

The storage engine manages where and how data is stored on physical storage devices (like hard drives). It makes sure data is stored safely, handles multiple users accessing the same data at once, and keeps a log of changes for recovery.

In modern SQL Server deployments, you can decouple compute and storage by utilizing technologies like Azure SQL Database, SQL Server on Azure Virtual Machines, or SQL Server Big Data Clusters. These solutions allow you to independently scale your compute resources (CPU and memory) and storage capacity as needed. This separation of resources enhances flexibility, scalability, and cost-efficiency.

Snowflake’s architecture explained

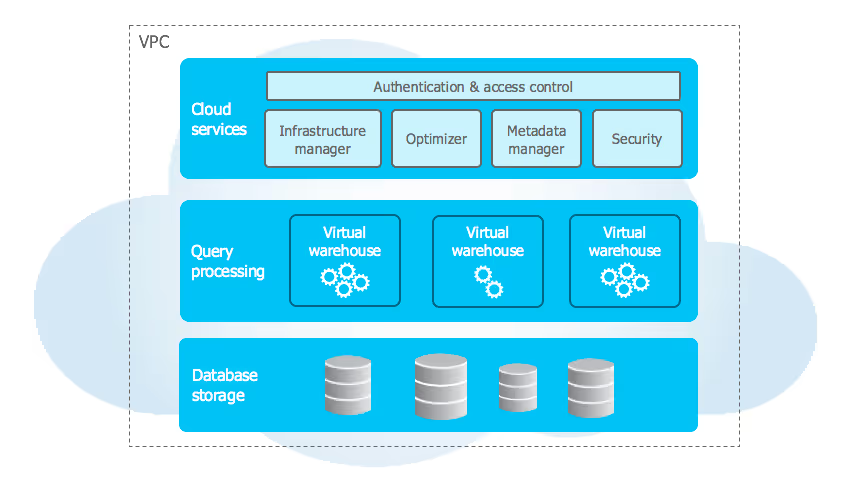

Snowflake’s architecture represents a significant departure from traditional database systems like SQL Server, with its innovative design focusing on separating data storage from compute resources. This architecture allows for a highly scalable and flexible data management environment.

In Snowflake, data storage is handled independently from compute processing. The data is stored on cloud-based storage services (such as Amazon S3, Azure Blob Storage, or Google Cloud Storage), allowing for virtually unlimited data storage capabilities. This data is stored in a columnar format, which is optimized for fast retrieval and efficient query performance, especially for analytical workloads. Snowflake manages the organization, file size, structure, compression, and metadata of the data storage layer, abstracting these details from the users.

On the compute side, Snowflake utilizes what it calls “virtual warehouses” to perform data processing tasks. A virtual warehouse is essentially a cluster of compute resources that can be scaled up or down independently of the storage. This means that you can increase or decrease your compute resources based on your current needs without affecting the underlying data. Each virtual warehouse operates independently, allowing multiple queries or jobs to run concurrently without contention.

Benefits of migration to Snowflake

Enhanced scalability and elasticity:

A key benefit of migrating from SQL Server to Snowflake is the substantial improvement in scalability and elasticity. Unlike SQL Server’s shared-disk architecture, Snowflake’s cloud architecture allows for dynamic scaling: you can adjust compute resources independently of storage, providing flexibility to handle workload variations. This means that during periods of increased demand, you can scale up resources quickly and efficiently, a stark contrast to SQL Server, where scaling often involves significant hardware investment and complex planning.

Cost-efficiency in resource utilization:

When comparing Snowflake with SQL Server, the cost-efficiency of Snowflake’s pay-as-you-go model stands out. In SQL Server, organizations often have to invest in resources to manage peak loads, leading to underutilization during off-peak periods. Snowflake’s model, which allows you to pay only for the storage and compute you use, presents a more economical approach, especially for businesses with fluctuating data processing needs.

Superior concurrency and multi-tenancy:

Snowflake’s ability to handle these diverse workloads without performance degradation is a considerable advantage over SQL Server. SQL Server, particularly in its standard configurations, can struggle with high concurrency levels, leading to performance bottlenecks. Snowflake’s architecture, with isolated compute clusters, enables efficient handling of multiple, simultaneous queries and jobs. This is especially beneficial for organizations where different teams or departments need to access and analyze data concurrently.

Data type mapping: SQL Server to Snowflake

These are the type mappings between SQL Server and Snowflake that you will encounter during migration. Silent type mismatches are one of the most common causes of post-migration data discrepancies. Validate every column, not just the row counts.

| SQL Server type | Snowflake type | Notes |

|---|---|---|

BIT | BOOLEAN | SQL Server BIT is 0/1; target BOOLEAN is TRUE/FALSE |

TINYINT | TINYINT | SQL Server TINYINT is unsigned 0-255 |

SMALLINT | SMALLINT | |

INT / INTEGER | INTEGER | |

BIGINT | BIGINT | |

DECIMAL(P,S) / NUMERIC(P,S) | NUMBER(P,S) | |

MONEY | NUMBER(19,4) | SQL Server MONEY is 8-byte fixed-precision currency |

SMALLMONEY | NUMBER(10,4) | |

FLOAT(N) | DOUBLE | FLOAT(1-24) = single precision; FLOAT(25-53) = double precision |

REAL | FLOAT | |

CHAR(N) / NCHAR(N) | CHAR(N) | |

VARCHAR(N) / NVARCHAR(N) | VARCHAR | |

VARCHAR(MAX) / NVARCHAR(MAX) | VARCHAR | SQL Server MAX = 2GB |

TEXT / NTEXT | VARCHAR | Deprecated in SQL Server; use VARCHAR(MAX) equivalent |

BINARY(N) | BINARY | |

VARBINARY(N) | BINARY | |

IMAGE | BINARY | Deprecated SQL Server binary LOB type |

UNIQUEIDENTIFIER | VARCHAR(36) | Store GUID as string; use UUID functions for generation |

DATE | DATE | |

TIME(N) | TIME | |

DATETIME | TIMESTAMP_NTZ(3) | SQL Server DATETIME has millisecond precision |

DATETIME2(N) | TIMESTAMP_NTZ(N) | |

SMALLDATETIME | TIMESTAMP_NTZ(0) | Minute precision only |

DATETIMEOFFSET(N) | TIMESTAMP_TZ(N) | |

XML | OBJECT | |

GEOGRAPHY / GEOMETRY | GEOGRAPHY | Verify coordinate projections |

HIERARCHYID | VARIANT | Serialize path as string (e.g., /1/2/3/) |

SQL_VARIANT | OBJECT | Dynamic type; cast to VARIANT/JSON or STRING |