.avif)

Folding Data #41

The next frontier for dbt

Last week, Tristan unveiled the biggest product update to dbt Core in a while that implements a vision for less fragile data systems that he announced 9 months ago.

TL;DR: dbt Core will support multiple projects within the same organization (the lack of explicit support of multi-project has been a massive pain at scale). While the actual multi-project support is still to be released, major prerequisites will be available by the end of the month.

dbt introduces the concept of interfaces to models (tables & views). When you build a new table, you can make it private (to your internal team) or public (intended for broader use).

Those interfaces will be controlled with Access and enforced with Contracts.

And they will be versioned.

Sounds familiar? Yes, these concepts continue the tradition of dbt borrowing and elegantly applying time-proven principles from software engineering.

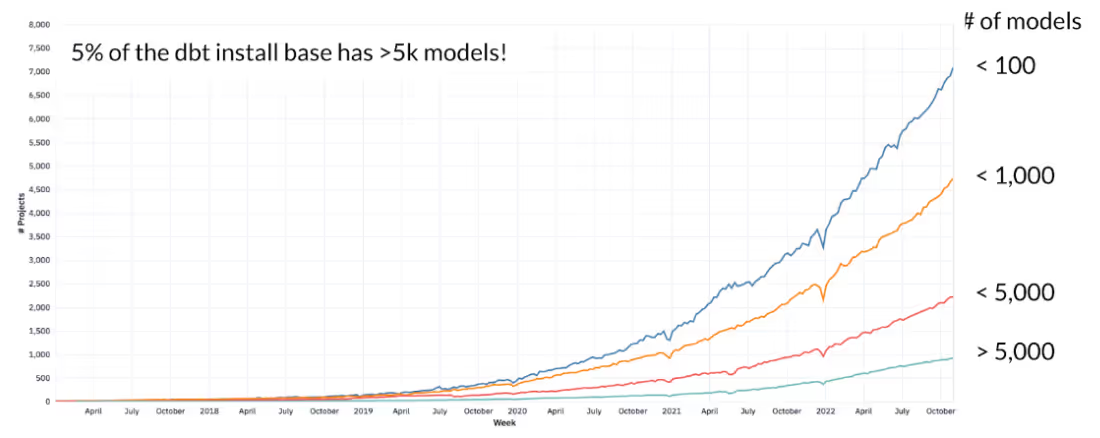

What's also remarkable is not only how fast the dbt adoption grows but how fast the complexity of the dbt projects explodes, as illustrated by the chart shared by Tristan:

The future of dbt in organizations is data mesh decentralized. And while modern warehouses taught us not to worry about the scale of the data, it's the complexity of the data and SQL code that are posing the most significant challenges to organizations scaling their data platforms.

What I've been working on

(As always, helping data teams ship faster with higher confidence by automating manual work).

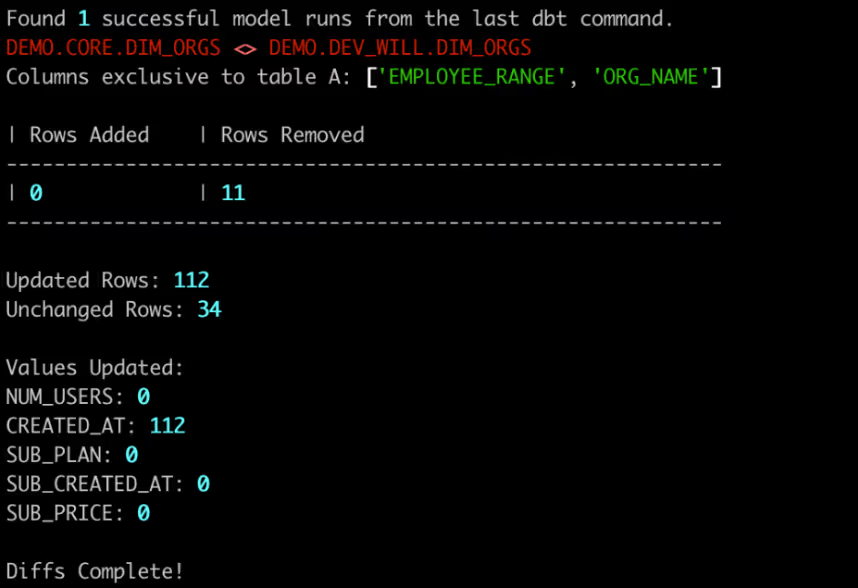

TL;DR: our open-source data-diff toolkit now integrates with dbt for seamless testing as you code and supports rich diffing visualizations through Datafold Cloud.

In "The day you stopped breaking your data", I argued that the most effective way to solve data quality issues is to prevent them from happening. For that, I suggested tools for robust change management, such as version control, CI/CD, and data diffing (that helps you understand the impact of a code change on the data before deploying it).

Automating code validation in CI is a powerful way to prevent deploys that cause data to break and stakeholders to lose trust in your work, and that's what Datafold has been doing for a few years now.

While having guardrails during the deployment and code review process helps prevent data incidents, with the help of our users, we learned that we could do something better: integrate testing into the development process itself.

In software development, they call it "shifting left" - running checks and tests as early in the development process as possible, ideally catching potential failures as you type the code.

Data-diff CLI + Datafold Cloud lets you diff local work against production to validate the changes and avoid introducing breaking changes before the code gets to the repo. It's free for individuals, and teams should consider getting on CI/CD.

If you're using dbt: pip install data-diff

This will let you run data-diff --dbt right after dbt run to diff your newly built local models against production to validate the code changes.

UPCOMING EVENT

Data Quality Meetup: Running dbt at scale

If your team has reached the point where you start feeling the challenges of running dbt at scale, I invite you to join our next virtual Data Quality Meetup. Joining us as our speakers and panelists are data leaders from Virgin Media O2, Capital One, Finn Auto, Airbyte and dbt Labs.

During this meetup, we will cover:

⚖️ How to navigate speed / data quality / compliance risks and trade-offs

⚙️ How to establish and scale great developer workflows

💎 Best practices for documentation, knowledge sharing and onboarding

👫 How to scale dbt beyond the data team in a large organization

💫 And more!

.avif)